Remote hiring has gotten faster, more technical, and less forgiving. A candidate might move from a behavioral screen to a coding assessment, then into a live video call where every pause feels heavier than it should. That’s why an AI interview assistant is worth taking seriously right now — not as a magic answer machine, but as a real-time support layer for people who need structure, speed, and calm under pressure.

I looked at Linkjob AI from a practical angle rather than a marketing one. The useful question isn’t simply “Does it use AI?” Almost everything does these days. The better question is: can it listen to interview context, process screen content, help with coding or spoken questions, and stay manageable while the user is already under stress?

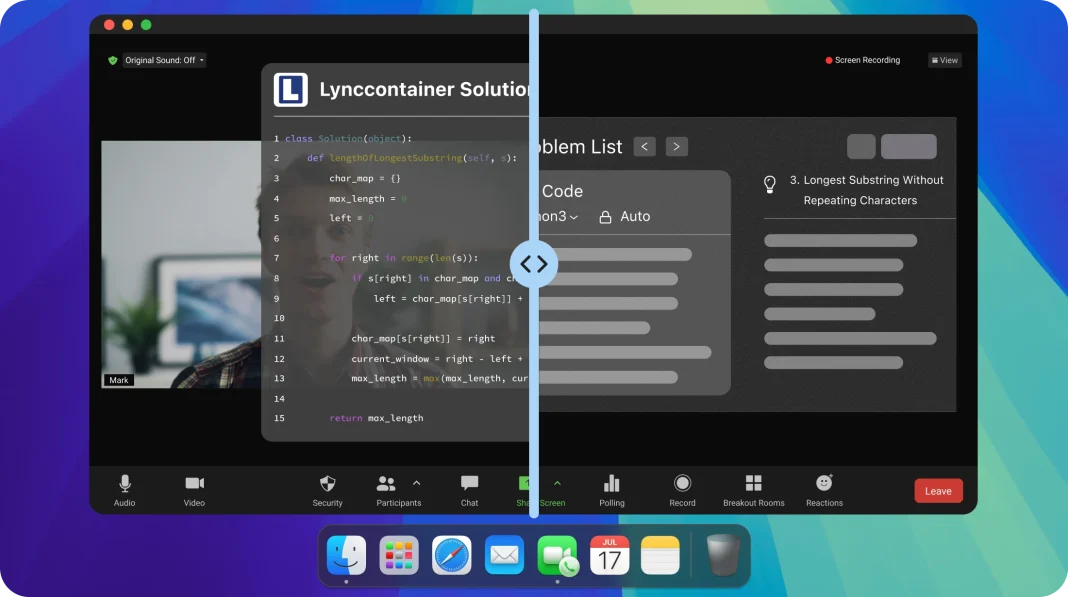

Based on the official product pages, Linkjob AI is a desktop-based real-time interview helper. It supports live interview assistance, mock interview practice, a coding copilot, online quiz support, screenshot-based analysis, customizable AI responses, and a range of interview formats — general, phone, HireVue-style, and coding interviews. It also features a floating desktop interface that works alongside video meeting or testing environments.

That combination makes it more interesting than a generic chatbot. A chatbot waits for typed input. Linkjob AI is designed around the messy reality of interviews: spoken questions, shared screens, coding platforms, tight response windows, and the need to turn raw pressure into organized answers.

Why Real-Time Interview Support Has Become More Valuable

Modern interviews are no longer just conversations. They routinely blend audio, screen sharing, browser-based assessments, coding editors, and behavioral storytelling — which creates a specific problem: candidates may know the material but still struggle to organize a response quickly enough in the moment.

That’s the gap Linkjob AI is built for. It’s designed to help users react to interview questions in real time, especially when a question arrives verbally or appears on screen. The official site highlights support for interview questions, coding assessments, online problem-solving, smart screenshots, and AI mock interview practice.

In evaluating this kind of tool, the right test isn’t whether it can produce polished paragraphs in isolation — that’s too easy. The stronger test is whether it can assist across different pressure patterns: a behavioral prompt, a coding problem, a screen-based task, and a live environment where the user can’t afford to break their flow.

Testing Linkjob AI Through Real Interview Scenarios

A fair review needs scenarios, not slogans. Here’s how Linkjob AI holds up across three realistic situations: behavioral questions, coding interviews, and mock practice.

Behavioral Questions Need Structure More Than Polish

Take a common behavioral question: “Tell me about a time you handled conflict.” The difficulty isn’t language alone. The real challenge is choosing the right story, keeping it concise, and making the answer feel credible rather than rehearsed.

Here, Linkjob AI’s value is in delivering answer guidance quickly — ideally helping the user build a clean structure: situation, action, result, reflection. If the output is too generic, the candidate still needs to layer in real details. That’s a limitation worth naming clearly. AI can organize the frame, but it can’t invent an authentic work history without the answer becoming hollow or risky.

Coding Interviews Depend on Screen Context

This is where Linkjob AI’s screenshot and coding copilot features matter most. The official pages describe support for coding assessments and smart screenshots, and the FAQ explains that clicking the screenshot button sends the captured image to the AI together with the next audio segment.

That’s meaningfully more useful than manually copying a problem into a separate chatbot. Coding platforms often include constraints, examples, function signatures, and edge cases — the screen itself is part of the question. A tool that can work with both spoken context and captured screen content reduces friction between seeing a problem and finding a starting point.

The advantage isn’t that every answer becomes perfect. It’s faster orientation. A user might need help identifying whether a problem calls for a two-pointer approach, dynamic programming, graph traversal, or a hash map. In that moment, a short structured hint is more valuable than a long finished solution.
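To make that kind of orientation concrete, here is a minimal Python sketch of one pattern choice: finding two numbers that sum to a target. It is an illustration only, not output from Linkjob AI, and the function names `pair_sum_hash` and `pair_sum_two_pointer` are hypothetical. The useful hint in this case is a single question: is the input already sorted?

```python
def pair_sum_hash(nums, target):
    """Hash map pattern: works on unsorted input, O(n) time, O(n) extra space.

    Returns a pair of indices (i, j) with i < j and nums[i] + nums[j] == target,
    or None if no such pair exists.
    """
    seen = {}  # value -> index where it was last seen
    for j, value in enumerate(nums):
        i = seen.get(target - value)
        if i is not None:
            return (i, j)
        seen[value] = j
    return None


def pair_sum_two_pointer(sorted_nums, target):
    """Two-pointer pattern: requires sorted input, O(n) time, O(1) extra space.

    Returns a pair of indices (lo, hi) with lo < hi whose values sum to target,
    or None if no such pair exists.
    """
    lo, hi = 0, len(sorted_nums) - 1
    while lo < hi:
        total = sorted_nums[lo] + sorted_nums[hi]
        if total == target:
            return (lo, hi)
        if total < target:
            lo += 1  # sum too small: move the left pointer to a larger value
        else:
            hi -= 1  # sum too large: move the right pointer to a smaller value
    return None
```

A hint as short as "unsorted input, so use a hash map" or "already sorted, so use two pointers" is often enough to get started, and the candidate still has to write and explain the solution.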

Mock Interviews Reveal Weak Spots Before Pressure Hits

Many candidates fail not from lack of knowledge, but from poor timing, vague storytelling, or weak explanation habits. Mock interview practice addresses exactly that.

Used in a preparation setting, Linkjob AI functions less like a shortcut and more like a coach. Candidates can rehearse answers, check whether explanations run too long, and practice translating scattered experience into interview-ready language. It’s also the right time to refine prompts and response preferences before relying on the assistant in a higher-stakes situation.

How Linkjob AI Works in Practice

The product is desktop-first — not a browser-only writing assistant. It’s built around installing an app, opening a control interface, configuring the assistant, and using real-time support during interviews or preparation sessions.

Step 1: Download the desktop app. The official site provides download access. A desktop-based assistant is better suited to sitting across video apps, browsers, coding platforms, and assessment pages than a web chatbot that requires constant tab switching.

Step 2: Open the settings panel. The FAQ describes using the gear button in the control box to access settings, where the assistant can be customized. This step matters because different interviews call for different response styles — a behavioral answer shouldn’t sound like a coding explanation, and vice versa.

Step 3: Use audio and screenshot assistance. During live sessions, Linkjob AI can listen to interview audio and work with captured screenshots at the same time. One practical boundary worth noting: scrolling screenshots aren’t supported, so longer questions may require multiple captures.

Step 4: Apply human judgment to the output. This step isn’t a button in the app, but it’s necessary. AI output should be treated as guidance, not a script. The candidate still needs to filter the response, connect it to personal experience, and make sure the answer fits what the interviewer actually asked.

Where Linkjob AI Feels Strongest — and Where It Falls Short

The product is at its best when a candidate needs fast structure from messy input: live verbal questions, coding prompts on screen, online assessment tasks, mock interview rehearsal.

It’s less suited to users who expect one-click perfection. Complex coding problems still require careful reasoning. Behavioral answers still need personal detail. Long screen prompts may need multiple captures. The FAQ also notes that response speed can vary depending on the model, audio transcription, server load, network conditions, and answer length, so it’s worth setting realistic expectations before a live session.

| Evaluation Area | Practical Fit | What to Expect |
| --- | --- | --- |
| Live interview support | Strong | Helpful structure, not guaranteed perfect answers |
| Coding assessments | Useful when screen context matters | Best for orientation and pattern recognition |
| Mock interview practice | Strong | Good for refining flow and building confidence |
| Setup learning curve | Moderate and manageable | Configure before serious use |
| Creative control | Better with custom prompts | Output quality depends on user direction |
| Stability | Practical but variable | Speed and results vary by model and context |

Who Should Consider Linkjob AI

Linkjob AI is most relevant for candidates preparing for technical interviews, remote hiring loops, coding tests, and structured behavioral interviews. It’s also useful for people who freeze under pressure, lose track of answer structure, or need help translating knowledge into clear spoken responses.

It may be especially valuable for candidates moving through multi-stage hiring processes — a software engineer preparing for coding rounds, a finance candidate rehearsing concise analytical answers, or a non-native English speaker looking to reduce hesitation and organize ideas more clearly in real time.

It’s less suitable for users who haven’t prepared at all and expect the assistant to carry the interview. That approach tends to produce shallow answers. The stronger use case is preparation combined with real-time support: know the material, configure the assistant, practice with it, and use it to stay organized when things move fast.

The Real Value: Calmer Execution Under Pressure

Linkjob AI isn’t just another AI writing tool with interview branding. What sets it apart is the combination of a desktop workflow, real-time assistance, coding support, smart screenshots, mock practice, and customizable responses — pieces that fit together because modern interviews are fragmented across voice, video, browser screens, and coding environments.

The most honest verdict: Linkjob AI works best as a pressure-management layer for candidates who are already committed to improving. It helps users respond faster, structure answers more clearly, and handle screen-based tasks with less friction. It doesn’t replace preparation, and results will vary based on prompt quality, question complexity, and live context.

For users who treat it as both a preparation companion and a real-time guide, the product has a clear role: turning interviews from chaotic performances into something more testable — listen, capture, interpret, structure, respond, adjust.